Does the Super Computer exist?

All the previous discussions lead me to the conclusion that our Universal Computer is only part of a grander computing structure that solves even bigger tasks.

The presence of “invisible” Black Holes and of breakable bytes of information (molecules, atoms, etc.) is evidence of this. All of these disappear from our Universe to some unknown place. Obviously, macro- and micro-bits take part in some further computing process that we know nothing at all about: not a scintilla! Our Computer stops following that process, but the process obviously continues in these invisible parallel worlds.

Our Computer is akin to a calculator that can handle, say, numbers of up to ten digits. It has two limits: its calculating capacity and the size of the data arrays it can process. But imagine several such calculators working together: when the first one reaches the biggest number it can hold, it hands part of its work over to the next calculator. The second calculator uses these partial results in its own tasks. When the second calculator reaches its limits, the third calculator joins in. The process can also run back and forth: if the second calculator arrives at a number too small for it, then instead of rounding it down to zero it re-engages the first one, which completes the computation.

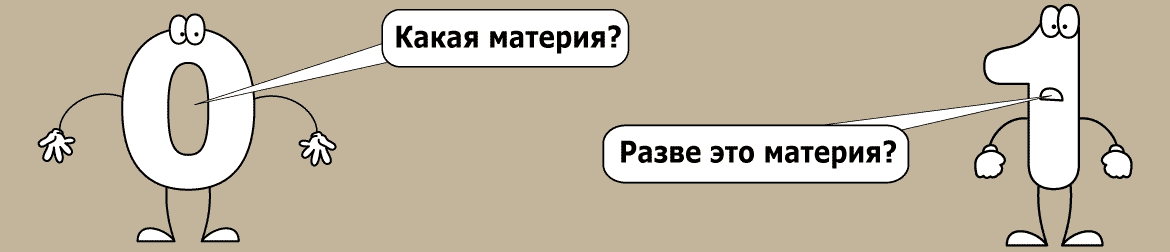
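The chain of calculators described earlier behaves much like multi-precision ("bignum") arithmetic, where each fixed-width chunk passes its overflow (a carry) on to the next chunk. The minimal Python sketch below illustrates only that analogy, not the author's Universal Computer. It assumes each calculator holds ten decimal digits, and the names `to_chain`, `from_chain`, `add_chains` and `sub_chains` are hypothetical. Subtraction shows the reverse direction: when a result shrinks back into the first calculator's range, the higher calculators drop out and nothing is rounded away.

```python
# Sketch of the calculator-chain analogy as limb-based (multi-precision) arithmetic.
# Assumption: every calculator in the chain holds at most DIGITS decimal digits.
DIGITS = 10
BASE = 10 ** DIGITS


def to_chain(n: int) -> list[int]:
    """Split a non-negative integer into per-calculator chunks, first calculator first."""
    if n < 0:
        raise ValueError("this sketch handles non-negative numbers only")
    chain = []
    while True:
        n, chunk = divmod(n, BASE)
        chain.append(chunk)
        if n == 0:
            return chain


def from_chain(chain: list[int]) -> int:
    """Recombine the chunks into one integer (the view from outside the chain)."""
    total = 0
    for chunk in reversed(chain):
        total = total * BASE + chunk
    return total


def add_chains(a: list[int], b: list[int]) -> list[int]:
    """Each calculator adds its own chunks and passes any overflow on to the next one."""
    result = []
    carry = 0
    for i in range(max(len(a), len(b))):
        x = a[i] if i < len(a) else 0
        y = b[i] if i < len(b) else 0
        carry, chunk = divmod(x + y + carry, BASE)
        result.append(chunk)
    if carry:
        result.append(carry)  # the last calculator overflowed: a new one joins the chain
    return result


def sub_chains(a: list[int], b: list[int]) -> list[int]:
    """Compute a - b (requires a >= b). A calculator that would go below zero
    borrows one unit from the next calculator instead of stopping."""
    result = []
    borrow = 0
    for i in range(max(len(a), len(b))):
        x = a[i] if i < len(a) else 0
        y = b[i] if i < len(b) else 0
        chunk = x - y - borrow
        borrow = 1 if chunk < 0 else 0
        result.append(chunk + borrow * BASE)
    if borrow:
        raise ValueError("a must be greater than or equal to b")
    while len(result) > 1 and result[-1] == 0:
        result.pop()  # calculators left holding zero drop out; the value returns to the lower ones
    return result


full = to_chain(BASE - 1)  # the first calculator is at its limit
print(add_chains(full, to_chain(1)))  # [0, 1]
print(sub_chains([0, 1], [1]))  # [9999999999]
```

Adding 1 to a full first calculator engages a second one; subtracting 1 again hands the whole value back to the first calculator.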

Such a chain can be very long; we can only guess how long. Our Computer is probably part of such a chain, positioned somewhere in the middle. As Computer number N, it works within a chain that handles even larger data arrays, receiving portions of 0s and 1s (bits) from Computer N-1. When our calculating capacity runs out, a Black Hole (a macro-unit) forms. At that point Computer N+1 joins in, and so on.

The only real proof of the Super Computer’s existence is the excessive size of the data arrays. We clearly see only the tip of the iceberg, not the whole. The whole is visible to someone else: someone who controls all these “minor” Computers. I don’t know what happens there or how, but I think the compression program (gravitation) runs at that very level. Other optimising programs might also run at that level, but that is far from certain. The combined effect of these programs working at different levels could cause a macro-unit (a Black Hole) and a micro-bit (a micro-particle) to form under different conditions. One Black Hole need not be the same size as another. They can differ; what matters is that they are not “lost” at the Super Computer level. Increasing the data density in our Computer’s compressed files does not lead to any loss of data in the global data array. Once our data file grows past a certain point, its excessive complexity becomes unnecessary, and the file is simplified as it approaches the Black Hole state.

The same happens at the micro-bit level. That is, bits do not necessarily disappear from our Universe under the same conditions; they can also disappear under different ones. Everything depends on the work of the higher-level programs. It is they that decide which part of a bit can be sent to the level below. What matters is that task continuity is not lost. As it turns out, nuclear physicists have “split” their way into such a particle “zoo” that nobody has managed to fit it into a complete system. I suspect the problem is that they have discovered the debris of micro-bits rather than the micro-bits themselves. In principle, micro-bits should be infinitely stable in the working area of our Computer, whereas the debris should appear and disappear within a very short time. We should look for our micro-bit (the “indivisible” atom) according to these specifications.

Why, then, do physicists “see” the smaller debris of a micro-bit? Quite simply, our slow Computer is to blame. It does not immediately “stitch up” the holes left behind when a bit disappears. The disappearance of information cannot be fixed within a single clock cycle. The control lines also begin to slow down. On top of that, the higher Computer’s optimising program intervenes. As a result, hundreds, if not thousands, of clock cycles are needed to “annihilate” a micro-bit. This is the visibility of the invisible.

This time-consuming process creates a tunnel effect, in which some elementary particles (debris) easily pass through dense data arrays. In reality, of course, they do not move at all; micro-bits simply appear and disappear. This creates the illusion of movement, because the files change even within that short time slot. A good example of such debris is the “elusive” neutrino, which is really a rare fragment of a micro-bit. It proved extremely difficult to detect.

Igor Voroshilov says:

I received a question from Yuri S.:

Where, according to your theory, is the unified information field?

Thank you for the question, Yuri!

My answer is below:

Yes, the problem of a unified information space (pardon me, not field) is indeed a pressing one.

In our universe (a section of the Global Computer), we have access to only part of the data processed by that very Global Computer.

From our point of view, data arrays are broken off in black holes and at the level of microparticles. But the arrays themselves remain unified and are managed by the Global Computer’s controller. We cannot “see” the full data-processing cycle because of the limited technical capabilities of our universe’s computing core.

It is like forcing an old 32-bit computer to perform 64-bit calculations.

Without special hardware or software, this cannot be done. To perform calculations, the old computer must operate on 32-bit data. Our universe, like that old computer, works with specially prepared data.
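The 32-bit analogy has a concrete counterpart in ordinary computing: a 32-bit processor can still do 64-bit arithmetic in software by splitting each value into two 32-bit words and propagating a carry between them. The minimal Python sketch below shows this for addition only. The function names `split_u64`, `join_u64` and `add_u64_on_32bit` are hypothetical, and the code illustrates the analogy, not anything the author claims about the Global Computer.

```python
# Sketch: 64-bit unsigned addition on a machine whose registers hold only 32 bits.
# Python integers are unbounded, so masking with MASK32 emulates the 32-bit limit.
MASK32 = 0xFFFF_FFFF


def split_u64(value: int) -> tuple[int, int]:
    """'Prepare' a 64-bit value for the 32-bit core: return (high word, low word)."""
    if not 0 <= value < 1 << 64:
        raise ValueError("value must fit in 64 unsigned bits")
    return value >> 32, value & MASK32


def join_u64(hi: int, lo: int) -> int:
    """Reassemble the two 32-bit words into one 64-bit value."""
    return (hi << 32) | lo


def add_u64_on_32bit(a: tuple[int, int], b: tuple[int, int]) -> tuple[int, int]:
    """Add two split 64-bit values using only 32-bit word additions and a carry bit.
    Like fixed-width hardware, the result wraps around modulo 2**64."""
    a_hi, a_lo = a
    b_hi, b_lo = b
    low_sum = a_lo + b_lo
    carry = low_sum >> 32  # 1 if the low words overflowed 32 bits, else 0
    lo = low_sum & MASK32
    hi = (a_hi + b_hi + carry) & MASK32  # anything beyond 64 bits is discarded
    return hi, lo


x, y = 0xFFFF_FFFF, 1  # the low word is already full
print(hex(join_u64(*add_u64_on_32bit(split_u64(x), split_u64(y)))))  # 0x100000000
```

Here `split_u64` plays the role of the “specially prepared data”: the 32-bit core never sees the full 64-bit number, only its two halves and a carry bit.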

The Global Computer “slips in” these pre-formed data arrays so that our universe’s computing core can work. That is why we observe breaks in the data in the form of black holes and vanishing microparticles.

These processes could be called data-break lenses. They distort the shared information space of the Global Computer, in which information is connected and continuous.

Best regards,

Igor Voroshilov